Most viewed posts

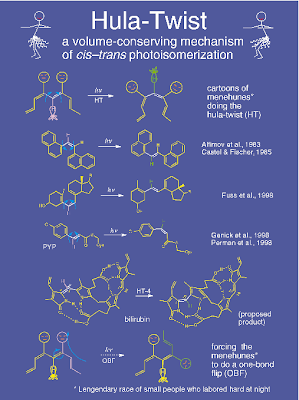

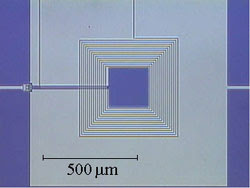

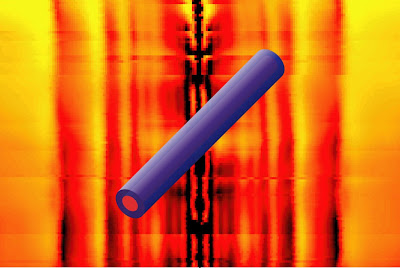

2009 is the year I started blogging, and I thought it would be interesting to see which posts on this blog have attracted the most viewers, partly because I sometimes find the results of such an analysis surprising. Thanks to Google Analytics this is easy to find out. The top 10 posts (and number of views) are below:

1. Am I HOMO- and LUMO-phobic? (476)
2. Mind the gap (230)
3. Spectral density of water (162)
4. 20 key concepts in thermodynamics and condensed matter physics (132)
5. Can we see visons? (107)
6. How can living organisms be so highly ordered? (101)
7. Walter Kauzmann: master of thermodynamics (98)
8. James Bond meets Niels Bohr (96)
9. Electron versus hole transport in molecular materials (92)
10. Quantum coherence in photosynthesis (91)

I found the results really encouraging: both the volume of views and the fact that the posts I think were important and/or original and high on scientific content [except for 8.] attracted the most attention. I was surprised that some of the career advice and "better sci